The Moral Machine: Why Our Inner Luddite Deserves a Seat at the Table

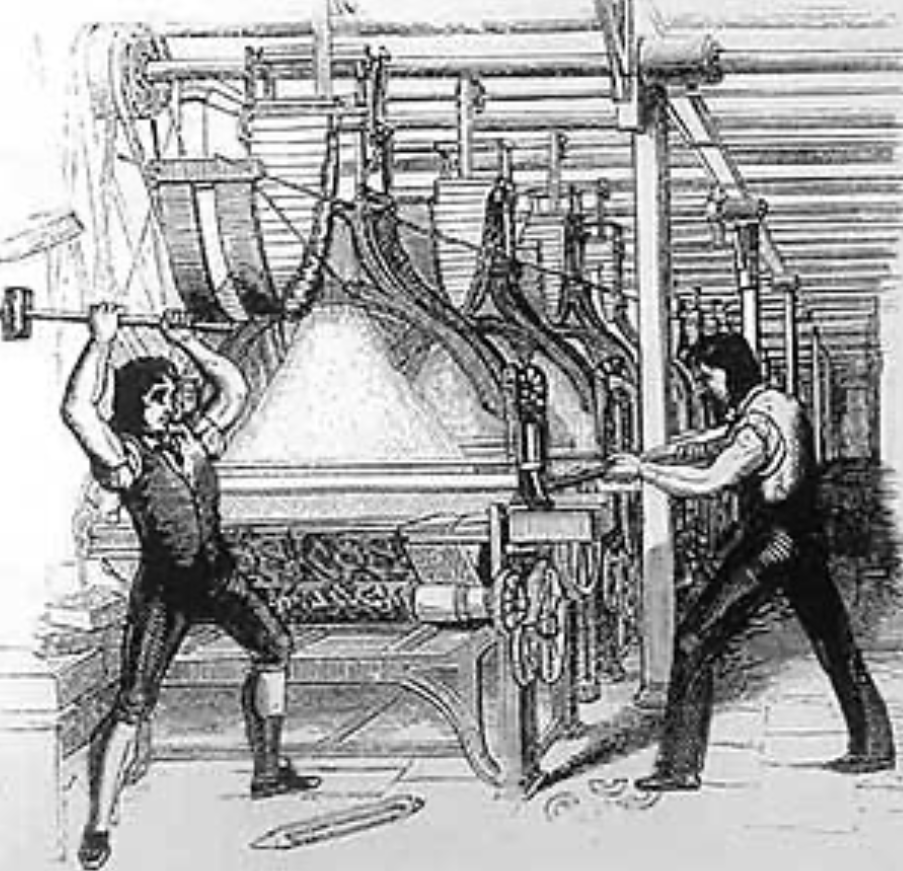

An early 19th-century engraving showing frame-breaking in progress by the Luddites of Nottinghamshire.

"Act only according to that maxim by which you can at the same time will that it should become a universal law."

- Immanuel Kant, Groundwork of the Metaphysics of Morals, 1785

In Defence of the Luddites

The term ‘Luddite’ has become, in contemporary usage, shorthand for irrational resistance to progress: a caricature so persistent that it has almost entirely obscured the actual historical movement from view. In fact, the Luddite uprisings of 1811-1816 were not the actions of people who feared technology as such. They were skilled textile workers, highly trained, proud of their craft, and fully aware of the implications of what was happening. They were making a moral and economic argument: that labour-saving machinery was being used not to improve their lives but to destroy them, while enriching those who already held power. They were not wrong. And they were suppressed with considerable violence.

I’ve been thinking about the Luddites recently while reading discussions of artificial intelligence. This is not because I wish to smash my computer, but because I notice how quickly my 'inner Luddite' (that voice of principled resistance within many of us) is dismissed as reactionary sentiment, unworthy of serious engagement. I think the opposite is true. In my view, the philosophical frameworks available to us demand that we listen to that inner voice more carefully than we currently do.

[Context: The Historical Luddites] Between 1811 and 1816, groups of English textile workers known as Luddites engaged in organised machine-breaking as a form of industrial protest. They were not opposed to technology per se, but to the specific economic conditions under which it was being deployed, which destroyed skilled crafts and concentrated wealth. The movement was met with military suppression; frame-breaking was made a capital offence. The term 'Luddite' only acquired its dismissive modern connotation decades later.

Two Philosophies, One Machine

The standard defence of AI adoption is broadly consequentialist. If the aggregate outcomes of developing and deploying artificial intelligence are positive (if lives are saved, access to knowledge is democratised, diseases are cured, creativity is amplified), then the moral case for development is compelling, whatever individual dislocations are involved. This is a utilitarian position, descending from Jeremy Bentham and John Stuart Mill, which holds that the moral worth of an action is determined by its consequences, specifically by its capacity to produce the greatest good for the greatest number.

It is a serious position, but it has a well-documented weakness: so long as the aggregate sum is positive, it tends to render invisible the suffering of the minority whose skills are destroyed, whose economic position is obliterated, and whose sense of meaning is erased by automation. The textile workers who lost everything were a minority of the population. The utilitarian calculus did not require their grief to appear on the balance sheet.

[Context: Deontological Ethics (Kant)] In contrast to consequentialism, deontological ethics, most rigorously developed by Immanuel Kant (1724-1804), holds that the moral worth of an action is intrinsic, not dependent on its outcomes. We have duties to treat people as ends in themselves rather than merely as means, and to act only on principles we would be willing to universalise. For Kant, lying was wrong even if it produced good consequences, because a world in which everyone lied whenever it was convenient would be self-defeating: no one could be believed, so no lie could succeed. Applied to AI, the question is not only whether AI produces good outcomes, but whether the process of its development and deployment respects human dignity, truthfulness, and autonomy as inherent values.

The Deontological Hearing

The Kantian deontologist asks a different set of questions. Not 'what are the aggregate outcomes?' but 'what duties do we have, and are they being honoured?' Kant's categorical imperative demands that we act only on principles we could universalise, and that is a test we can apply to every AI deployment. If every organisation, every government and every individual did the same, would the resulting world be one in which human dignity was preserved?

Such a question produces significant discomfort. If every company replaced its entry-level creative workers with AI, we would eliminate the conditions under which the next generation of expert practitioners develops. If every student used AI to complete their writing, we would gradually hollow out the very cognitive capacities that make original writing possible in the first place. If everyone who needed emotional support turned first to a chatbot, we would slowly be educating ourselves out of the difficult, irreplaceable practice of attending to one another. These are not merely hypothetical concerns; each describes a trend already under way. And the deontologist, the part of us that insists some things matter in themselves regardless of efficiency, is right to be alarmed.

In Conclusion

The unexamined adoption of AI is not neutral. It is a moral choice, and one we are mostly making without noticing that we are doing so. The moral imperative of our moment is not to reject AI. Rejection, at this point, is not available; the tide is simply too far in. Nor is it to adopt AI uncritically, at the pace set by those who profit from its acceleration. The moral imperative is discernment: the sustained, effortful practice of asking what we are choosing and why, and whose voices are being heard in that choosing. Our inner Luddite is not the enemy of progress. It is the part of us that remembers that progress is a means, not an end, and that insists we periodically ask what exactly we are progressing toward. It deserves, at the very least, a seat at the table. I suggest we stop ejecting it from the room.