The Machine That Wasn't There: James Tilly Matthews and the Hallucinating Mind

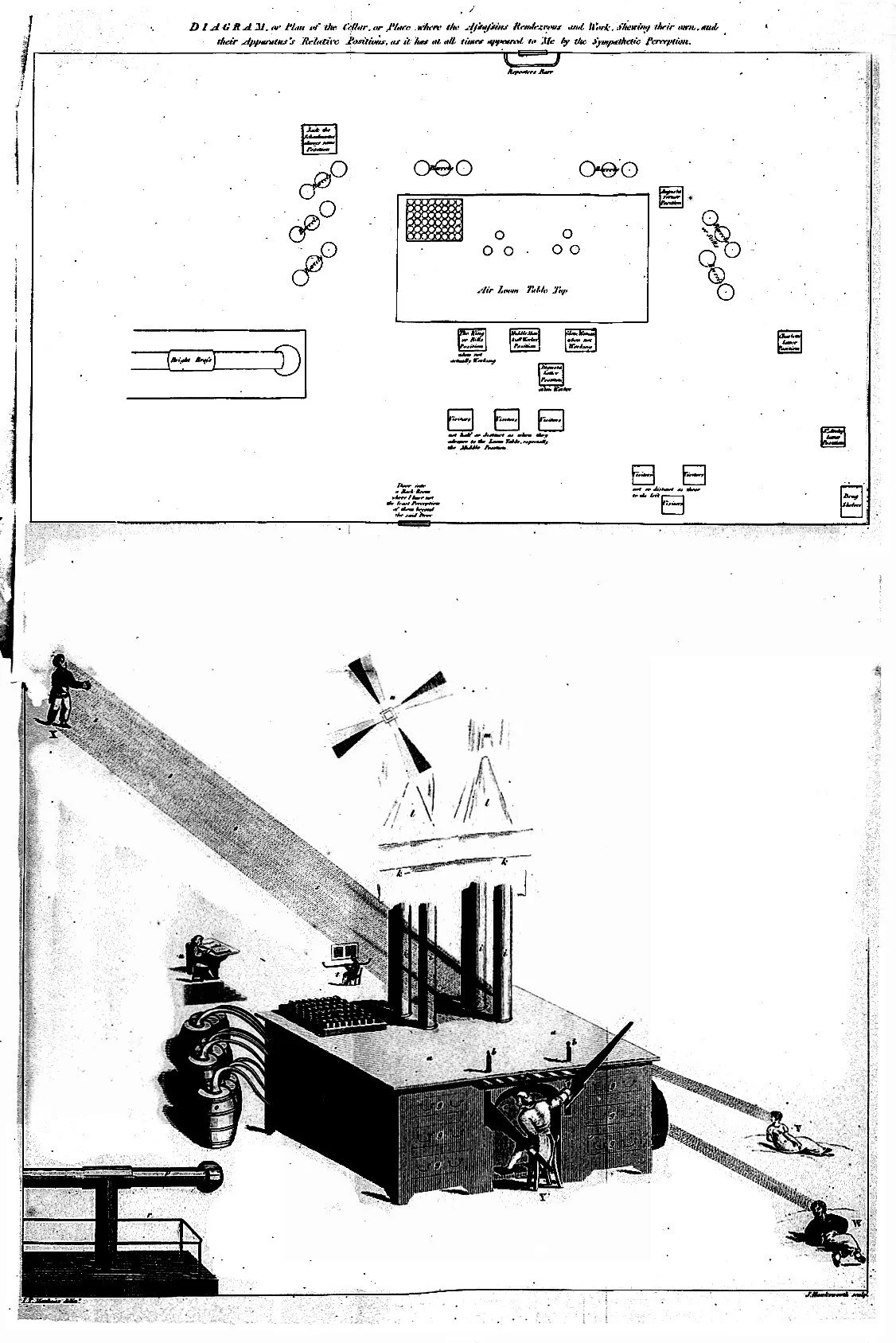

James Tilly Matthews' original diagram of the Air Loom, reproduced in Haslam's Illustrations of Madness (1810).

Around 1797, London tea merchant James Tilly Matthews became convinced that criminals were operating a pneumatic machine from a cellar near Bethlem Royal Hospital. He called it the Air Loom, believing it could weave magnetic fluids through the air into the nervous system, distorting thought, speech, and sensation. Matthews named its operators, catalogued their procedures with precision, and drew the device in meticulous technical detail: fluid reservoirs, brass retorts, silk threads, and a reach that was silent and invisible. The drawings are extraordinary.

[Context: James Tilly Matthews and the Air Loom] Matthews (1770–1815) was a London tea merchant and political activist who was committed to Bethlem Royal Hospital in 1797. His case was documented by the hospital's apothecary, John Haslam, in 'Illustrations of Madness' (1810), one of the earliest published psychiatric case studies. Matthews believed a gang of spies operated a device called the 'Air Loom', which could perform named procedures on the human mind, including "event-working," "thought-making," and "lobster-cracking." He produced detailed technical drawings of the machine, reproduced in Haslam's book.

Matthews had never seen the Air Loom. It did not exist. Yet in his mind it existed in vivid detail: a complete, named, purposeful system entirely of his own imagining. I first came across Matthews' Air Loom some time ago, but recently it has been coming to mind more often, specifically in relation to artificial intelligence and its potential to hallucinate: the tendency of large language models (LLMs) to generate confident, fluent, internally consistent information that bears no relationship to reality.

The Most Coherent Machine in the History of Madness

What makes the Air Loom compelling is not merely that Matthews believed in it, but that what he imagined was so coherent. The machine had logic, named parts - silk threads, receptacles for 'seminal fluid of assassination' - and named operators: 'Bill the King,' 'the Glove Woman,' 'Sir Archy.' Each procedure produced effects that Matthews could describe, distinguish, and locate. The system was not random or fragmented. Structurally, it was a theory: a model that explained his experience with internal consistency and a grim elegance.

This is what so many accounts of psychosis miss: not the departure from reality, but the intense constructive labour involved. The mind, unable to account for what it is experiencing through available rational frameworks, builds one. It does not generate noise. It generates meaning. The Air Loom is not the symptom of a broken mind; it is the product of a mind working at full capacity, just on the wrong problem.

What AI Means When It Doesn't Know What It Means

The word 'hallucination' is itself anthropomorphic. We borrow the language of inner experience to describe what is, technically, a statistical output. A language model does not see what is not there; it has no inner world for apparitions. It generates text based on patterns learned from vast quantities of human language, and it can produce confident, detailed, fictitious accounts dressed in the fluent syntax of authority.

[Context: AI Hallucination] In machine learning, "hallucination" describes the tendency of large language models to generate text that is grammatically fluent, contextually plausible, and entirely false. Unlike a human liar, the model does not know it is wrong; unlike a human error, its output is not the result of misremembering. The model generates a statistically probable continuation of a given prompt, and probability, it turns out, has no necessary relationship to truth.
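The gap between probability and truth can be seen in miniature. What follows is a toy sketch, not a description of how any real language model is built: it assumes a simple word-pair (bigram) counter with greedy decoding, and the five "training" sentences are invented for illustration rather than offered as historical claims. The names `train_bigrams`, `greedy_continue`, and `CORPUS` are hypothetical.

```python
from collections import Counter, defaultdict

END = "<end>"  # sentinel marking the end of a sentence

# Invented toy sentences (an assumption for illustration only).
CORPUS = [
    "haslam wrote the book",
    "haslam wrote the book",
    "matthews wrote the letter",
    "matthews wrote the petition",
    "matthews drew the machine",
]

def train_bigrams(sentences):
    """Count how often each word is followed by each other word."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        tokens = sentence.split() + [END]
        for current, following in zip(tokens, tokens[1:]):
            counts[current][following] += 1
    return counts

def greedy_continue(counts, prompt, max_tokens=10):
    """Extend the prompt by always choosing the most frequent next word."""
    tokens = prompt.split()
    for _ in range(max_tokens):
        followers = counts.get(tokens[-1])
        if not followers:
            break
        next_word = followers.most_common(1)[0][0]
        if next_word == END:
            break
        tokens.append(next_word)
    return " ".join(tokens)

counts = train_bigrams(CORPUS)
output = greedy_continue(counts, "matthews")
print(output)            # matthews wrote the book
print(output in CORPUS)  # False
```

Every step in that output is the likeliest one given the word before it, and the finished sentence reads as smoothly as any in the training set. It simply appears nowhere in the data and, on this essay's account, is untrue: the book was Haslam's.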

Both artificial intelligence and Matthews' Air Loom generate coherent narratives untethered from external reality. But a deeper parallel is more arresting: both expose a fundamental aspect of the relationship between structure and truth. We are disposed to trust coherence. When something holds together - when its internal logic is consistent, its details precise, its tone assured - we extend it the credit of believability. The Air Loom earned that credit solely from Matthews. AI hallucinations are a collective debt we have taken on.

Haslam, documenting Matthews' case, did not mock his system. He knew the delusion's form was diagnostic, revealing how minds work. We may need similar attentiveness toward AI hallucinations, treating them not just as bugs but as structural insights into how meaning can be generated without grounding in experience.

The Question of Experience

Matthews suffered his Air Loom. The machine was not a curiosity to him, nor a theory he held at arm's length: it pressed into his chest, interrupted his speech, and manufactured sensations in his body that he could not escape. Whatever the epistemological status of the Air Loom, its phenomenological reality was total. The gap between his inner world and the outer one was a source of genuine torment, and that torment was as real as anything can be.

An AI model does not suffer from hallucinations. There is no distress on the other side of the generated text, no experience of “wrongness”, no body that registers the gap between output and the wider world. And this asymmetry matters. Matthews was a mind trapped in a narrative it had constructed, but could not exit. The language model is a narrative-generating system with no interior in which entrapment could occur. One is a prisoner; the other is a printing press that occasionally prints fiction in the typography of fact.

Both cases force a question: what is the link between a statement's structure and its truth? We assume well-formed language signals engagement with the world. The Air Loom and hallucinating models challenge this. One is full of experience, one has none; both generate coherent unreal worlds.

Matthews eventually found a kind of peace. Transferred to a private asylum in Hackney, he built physical models of the Air Loom, three-dimensional, handcrafted, and careful. There is something unbearably poignant in this: a man transforming his persecutory vision into objects he could hold, examine, and set down. The machine that had colonised his mind became, at last, something he could put on a shelf.

I don't know what the equivalent gesture would look like for AI. Perhaps it begins with the kind of attentiveness we bring to the history of a man like Matthews: taking the structure of the confabulation seriously, not merely dismissing it, and asking what the pattern of error tells us about the architecture of the system producing it. Perhaps this is a challenge, a gauntlet laid down to an artist stumbling upon my words: what does the face, body and substance of AI look like? What might its anthropomorphised presence look or feel like?

In Conclusion

What the Air Loom offers is a model of what it looks like when coherence and truth come apart. Matthews' tragedy was not that he was irrational. It was that he was, by his own internal lights, entirely rational, and that this was precisely insufficient. What troubles me about AI hallucination isn't that machines make mistakes; that's survivable, correctable, and engineering's domain. What troubles me is that the wrongness looks like rightness. The fluency is identical. The confidence is indistinguishable. We're building systems that generate meaning at scale, yet we're only beginning to grasp what it means to generate meaning without grounding in the world. Matthews was able to put his Air Loom on a shelf. Whether we will find a shelf for AI remains uncertain. But perhaps the first step is recognising what, exactly, we are looking at.